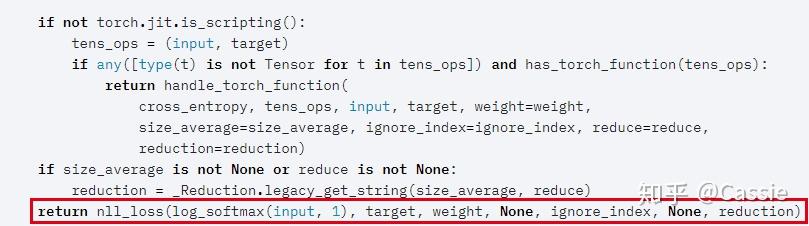

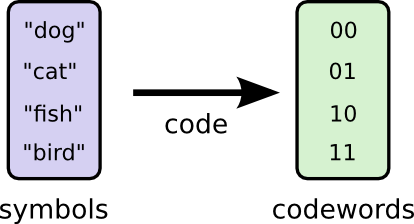

Criteria are helpful for training a neural network on classical tasks. Loss functions are implemented as sub-classes of Criterion, which has a similar interface to Module. It also has `forward()` and `backward()` methods for computing the loss and backpropagating gradients, respectively. In PyTorch, the corresponding loss classes such as `CrossEntropyLoss` are sub-classes of `torch.nn.Module`, and gradients are computed by calling `backward()` on the returned loss tensor.

`class torch.nn.CrossEntropyLoss(weight=None, size_average=None, ignore_index=-100, reduce=None, reduction='mean', label_smoothing=0.0)`

This criterion computes the cross entropy loss between input and target. It is useful when training a classification problem with C classes. If provided, the optional argument `weight` should be a 1D Tensor assigning a weight to each of the classes.

The relevant lines of the training script, in order, are as follows. Each image is resized with `image = Image.fromarray(image).resize((dim, dim))`, and the helper is applied to both splits: `x_train, x_test = resize(x_train), resize(x_test)  # because my real problem is in 60x60`. The inputs are reshaped and scaled with `x_train = x_train.reshape(-1, 1, dim, dim).astype('float32') / 255`, and the labels are cast with `y_train, y_test = y_train.astype('float32'), y_test.astype('float32')`. The optimizer is `optimizer = optim.Adam(net.parameters(), lr=0.03)`. The loaders are `train_loader = DataLoader(dataset=train, batch_size=64, shuffle=True)` and `test_loader = DataLoader(dataset=test, batch_size=64, shuffle=True)`. The evaluation loop accumulates `test_loss += loss_function(log_ps, labels)`, compares predictions to labels with `equals = top_class.type('torch.LongTensor') == labels.type(torch.LongTensor).view(*top_class.shape)`, and adds `accuracy += torch.mean(equals.type('torch.FloatTensor'))`. It then records `train_losses.append(running_loss/len(train_loader))` and `test_losses.append(test_loss/len(test_loader))`, followed by a `print` call.
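To make the role of the per-class `weight` argument concrete, here is a minimal plain-Python sketch of the arithmetic behind a weighted cross entropy with mean reduction. It is an illustration, not PyTorch's implementation: the helper names `log_softmax` and `cross_entropy` are hypothetical, the example scores are made up, and `ignore_index` and `label_smoothing` are left out. The sketch assumes that with `reduction='mean'` the weighted sum is divided by the sum of the weights of the target classes.

```python
import math

def log_softmax(logits):
    """Return log-probabilities for one row of raw class scores."""
    m = max(logits)  # subtract the max so exp() cannot overflow
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_sum for z in logits]

def cross_entropy(batch_logits, targets, weight=None):
    """Weighted mean cross entropy over a batch (hypothetical helper).

    batch_logits: list of rows, each holding C raw scores
    targets:      list of class indices in [0, C)
    weight:       optional list of C per-class weights
    """
    num_classes = len(batch_logits[0])
    if weight is None:
        weight = [1.0] * num_classes
    total, weight_sum = 0.0, 0.0
    for logits, t in zip(batch_logits, targets):
        total += -weight[t] * log_softmax(logits)[t]
        weight_sum += weight[t]
    # Assumed 'mean' behaviour: normalise by the weights actually used.
    return total / weight_sum

# Made-up scores for a batch of two samples and C = 3 classes.
logits = [[2.0, 0.5, -1.0], [0.1, 0.2, 3.0]]
targets = [0, 2]
unweighted = cross_entropy(logits, targets)
weighted = cross_entropy(logits, targets, weight=[1.0, 1.0, 5.0])
print(unweighted, weighted)
```

Raising the weight of class 2 pulls the batch loss toward the loss of the sample labelled 2, which is the usual way to compensate for class imbalance.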
I'm doing a CNN with PyTorch for a task, but it won't learn and improve the accuracy. I made a version working with the MNIST dataset so I could post it here. I'm just looking for an answer as to why it's not working. The architecture is fine: I implemented it in Keras and had over 92% accuracy after 3 epochs. Note: I reshaped the MNIST images into 60x60 pictures because that's how the pictures are in my "real" problem. The data is loaded with `(x_train, y_train), (x_test, y_test) = mnist.load_data()`.

Output of the BCE loss example: `BCE Loss tensor(3.2321, grad_fn=...)`.

Binary Cross Entropy with Logits Loss: `torch.nn.BCEWithLogitsLoss()`. This class combines Sigmoid and BCELoss into a single class. This version is numerically more stable than using Sigmoid and BCELoss individually. The input and target have to be the same size and have a float dtype.

To compute the element-wise entropy of an input tensor, we use torch…
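The claim that combining the sigmoid and the binary cross entropy is more stable than applying them separately can be illustrated with a small plain-Python sketch. The function names `bce_naive` and `bce_with_logits` are hypothetical, and the rearranged formula `max(x, 0) - x*y + log(1 + exp(-|x|))` is a common stable form, used here as an assumption about the kind of rearrangement involved rather than as a copy of PyTorch's code.

```python
import math

def bce_naive(logit, target):
    """Sigmoid first, then binary cross entropy, as two separate steps."""
    p = 1.0 / (1.0 + math.exp(-logit))
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))

def bce_with_logits(logit, target):
    """Same loss computed directly from the logit in a stable form."""
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))

# For moderate logits the two versions agree.
print(bce_naive(0.5, 1.0), bce_with_logits(0.5, 1.0))

# For a large logit the sigmoid rounds to exactly 1.0, so the naive
# version ends up taking log(0) and fails; the fused form does not.
try:
    bce_naive(40.0, 0.0)
except ValueError as err:
    print("naive version failed:", err)
print(bce_with_logits(40.0, 0.0))
```

In plain Python the failure shows up as an exception; the point of the sketch is only that the separate sigmoid step discards the information the logarithm needs.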
